In this blog, I'll describe how we use RocksDB at Rockset and how we tuned it to get the most performance out of it. I assume that the reader is generally familiar with how Log-Structured Merge tree based storage engines like RocksDB work.

At Rockset, we want our users to be able to continuously ingest their data into Rockset with sub-second write latency and query it in 10s of milliseconds. For this, we need a storage engine that can support both fast online writes and fast reads. RocksDB is a high-performance storage engine that is built to support such workloads. RocksDB is used in production at Facebook, LinkedIn, Uber and many other companies. Projects like MongoRocks, Rocksandra, MyRocks etc. used RocksDB as a storage engine for existing popular databases and have been successful at significantly reducing space amplification and/or write latencies. RocksDB's key-value model is also well suited to implementing converged indexing, where each field in an input document is stored in a row-based store, a column-based store, and a search index. So we decided to use RocksDB as our storage engine. We are lucky to have significant expertise on RocksDB in our team in the form of our CTO Dhruba Borthakur, who founded RocksDB at Facebook. For each input field in an input document, we generate a set of key-value pairs and write them to RocksDB.

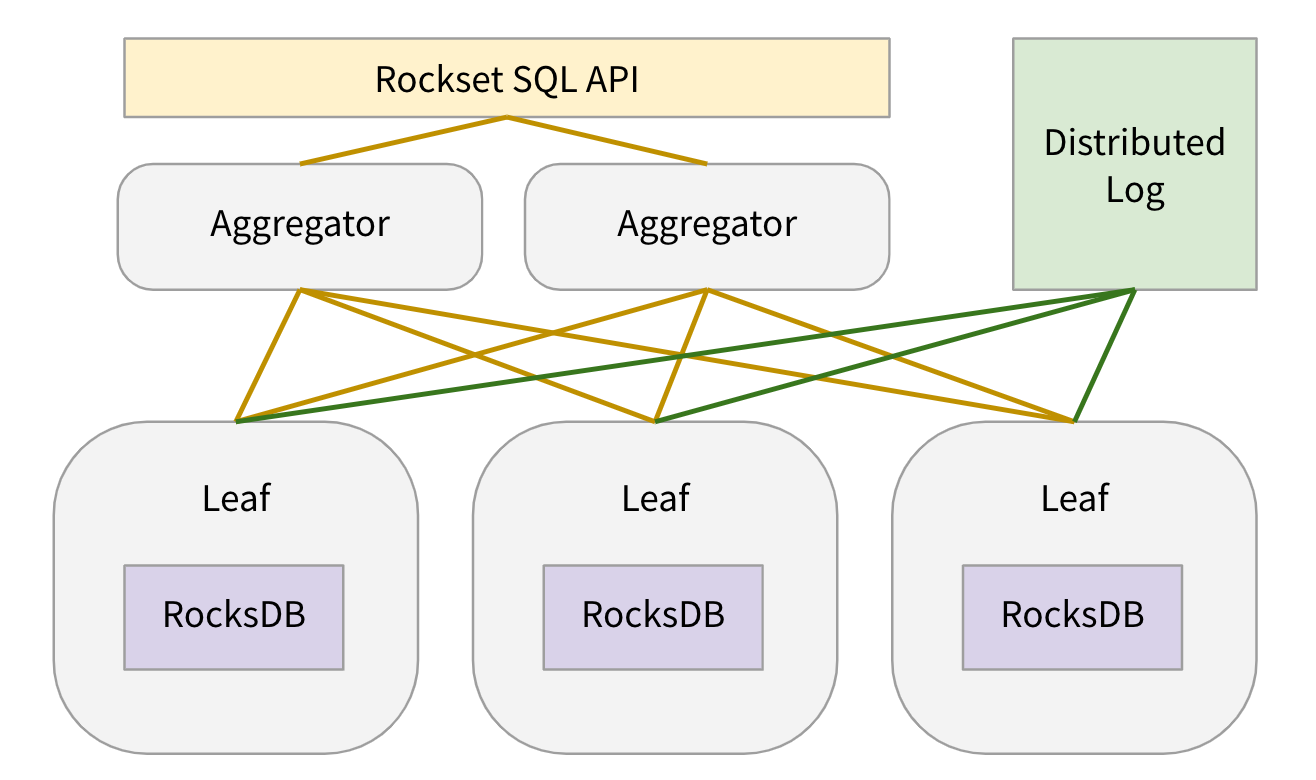

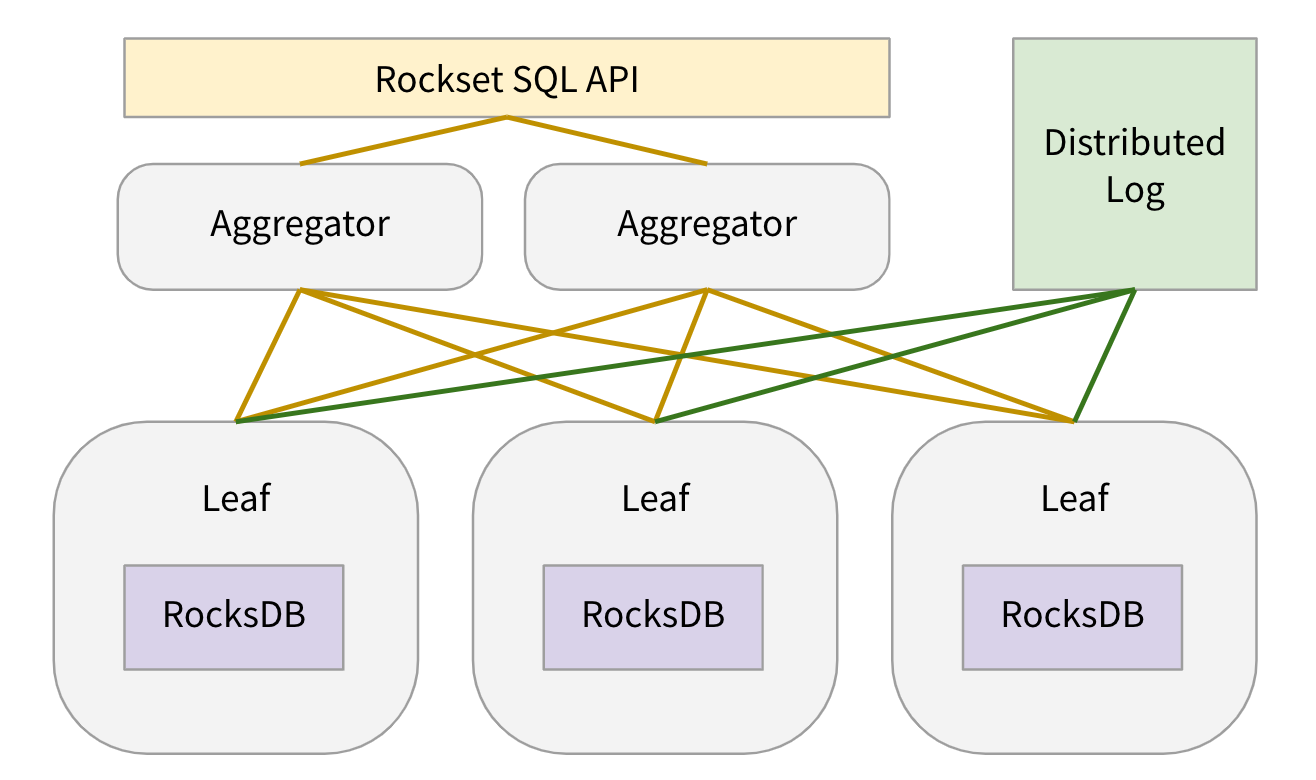

Let me quickly describe where the RocksDB storage nodes fall in the overall system architecture.

When a user creates a collection, we internally create N shards for the collection. Each shard is replicated k ways (usually k=2) to achieve high read availability, and each shard replica is assigned to a leaf node. Each leaf node is assigned many shard replicas of many collections. In our production environment each leaf node has around 100 shard replicas assigned to it. Leaf nodes create 1 RocksDB instance for each shard replica assigned to them. For each shard replica, leaf nodes continuously pull updates from a DistributedLogStore and apply the updates to the RocksDB instance. When a query is received, leaf nodes are assigned query plan fragments to serve data from some of the RocksDB instances assigned to them. For more details on leaf nodes, please refer to the Aggregator Leaf Tailer blog or the Rockset white paper.

To achieve query latency of milliseconds under 1000s of qps of sustained query load per leaf node while continuously applying incoming updates, we spent a lot of time tuning our RocksDB instances. Below, we describe how we tuned RocksDB for our use case.

RocksDB-Cloud

RocksDB is an embedded key-value store. The data in 1 RocksDB instance is not replicated to other machines, so RocksDB on its own cannot recover from machine failures. To achieve durability, we built RocksDB-Cloud. RocksDB-Cloud replicates all the data and metadata for a RocksDB instance to S3. Thus, all SST files written by leaf nodes get replicated to S3. When a leaf node machine fails, all shard replicas on that machine get assigned to other leaf nodes. For each new shard replica assignment, a leaf node reads the RocksDB files for that shard from the corresponding S3 bucket and picks up where the failed leaf node left off.

Disable Write Ahead Log

RocksDB writes all its updates to a write ahead log and to the active in-memory memtable. The write ahead log is used to recover data in the memtables in the event of a process restart. In our case, all the incoming updates for a collection are first written to a DistributedLogStore. The DistributedLogStore itself acts as a write ahead log for the incoming updates. Also, we do not need to guarantee data consistency across queries. It is alright to lose the data in the memtables and re-fetch it from the DistributedLogStore on restarts. For this reason, we disable RocksDB's write ahead log. This means that all our RocksDB writes happen in-memory.
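As a rough illustration of what disabling the write ahead log looks like at the API level, the fragment below sets `WriteOptions::disableWAL` on a write. The helper name `ApplyWithoutWal` is hypothetical, and the sketch assumes the caller can replay lost updates from an external log, as described above. It needs the RocksDB headers and library to build.

```cpp
#include <string>

#include <rocksdb/db.h>
#include <rocksdb/options.h>
#include <rocksdb/write_batch.h>

// Hypothetical helper: applies one update without appending it to RocksDB's
// write ahead log. Only safe when updates can be replayed from elsewhere.
rocksdb::Status ApplyWithoutWal(rocksdb::DB* db,
                                const std::string& key,
                                const std::string& value) {
  rocksdb::WriteOptions write_options;
  write_options.disableWAL = true;  // the update goes to the memtable only

  rocksdb::WriteBatch batch;
  batch.Put(key, value);
  return db->Write(write_options, &batch);
}
```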

Writer Rate Limit

As mentioned above, leaf nodes are responsible for both applying incoming updates and serving data for queries. We can tolerate much higher latency for writes than for queries. As much as possible, we want to use only a fraction of the available compute capacity for processing writes and most of it for serving queries. We limit the number of bytes that can be written per second to all RocksDB instances assigned to a leaf node. We also limit the number of threads used to apply writes to RocksDB instances. This helps minimize the impact RocksDB writes could have on query latency. Also, by throttling writes in this manner, we never end up with an imbalanced LSM tree or trigger RocksDB's built-in, unpredictable back-pressure/stall mechanism. Note that neither of these features is available in RocksDB; we implemented them on top of RocksDB. RocksDB supports a rate limiter to throttle writes to the storage device, but we need a mechanism to throttle writes from the application to RocksDB.
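One common way to cap bytes written per second at the application level is a token bucket. The sketch below is not our production implementation; the class name `ByteRateLimiter` and its parameters are assumptions made for illustration, and the clock is passed in explicitly so the behavior is easy to reason about. It covers only the byte budget, not the limit on writer threads.

```cpp
#include <algorithm>
#include <chrono>
#include <cstddef>
#include <mutex>

// Hypothetical token-bucket limiter shared by all writers on a node.
// Tokens are bytes; they refill at `bytes_per_sec` up to `burst_bytes`.
class ByteRateLimiter {
 public:
  using Clock = std::chrono::steady_clock;

  ByteRateLimiter(double bytes_per_sec, double burst_bytes,
                  Clock::time_point start)
      : rate_(bytes_per_sec),
        capacity_(burst_bytes),
        tokens_(burst_bytes),
        last_(start) {}

  // Returns true and consumes tokens if `bytes` fit in the budget at `now`.
  // Returns false otherwise; the caller should retry the write later.
  bool TryAcquire(std::size_t bytes, Clock::time_point now) {
    std::lock_guard<std::mutex> lock(mu_);
    Refill(now);
    const double needed = static_cast<double>(bytes);
    if (needed > tokens_) return false;
    tokens_ -= needed;
    return true;
  }

 private:
  // Adds tokens for the time elapsed since the last refill, capped at capacity.
  void Refill(Clock::time_point now) {
    if (now <= last_) return;
    const std::chrono::duration<double> elapsed = now - last_;
    tokens_ = std::min(capacity_, tokens_ + elapsed.count() * rate_);
    last_ = now;
  }

  std::mutex mu_;
  double rate_;
  double capacity_;
  double tokens_;
  Clock::time_point last_;
};
```

A writer thread would call `TryAcquire(batch_size, ByteRateLimiter::Clock::now())` before each write and back off when it returns false, which keeps the throttling decision outside RocksDB.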

Sorted Write Batch

RocksDB can achieve higher write throughput if individual updates are batched in a WriteBatch, and higher still if consecutive keys in a write batch are in sorted order. We take advantage of both of these. We batch incoming updates into micro-batches of ~100KB and sort them before writing them to RocksDB.
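The batching and sorting step can be sketched without any RocksDB dependency. The `MicroBatcher` class below is a hypothetical illustration: it buffers updates until a byte budget is reached and then hands them back sorted by key. A stable sort is used so that multiple updates to the same key keep their arrival order.

```cpp
#include <algorithm>
#include <cstddef>
#include <string>
#include <utility>
#include <vector>

using KeyValue = std::pair<std::string, std::string>;

// Hypothetical micro-batcher: collects updates up to a byte budget and
// returns them sorted by key, ready to be copied into a write batch.
class MicroBatcher {
 public:
  explicit MicroBatcher(std::size_t max_bytes) : max_bytes_(max_bytes) {}

  // Buffers one update. Returns true once the batch has reached its
  // byte budget and should be flushed.
  bool Add(std::string key, std::string value) {
    bytes_ += key.size() + value.size();
    pending_.emplace_back(std::move(key), std::move(value));
    return bytes_ >= max_bytes_;
  }

  // Returns the buffered updates sorted by key and resets the batcher.
  std::vector<KeyValue> TakeSorted() {
    std::stable_sort(pending_.begin(), pending_.end(),
                     [](const KeyValue& a, const KeyValue& b) {
                       return a.first < b.first;
                     });
    std::vector<KeyValue> out = std::move(pending_);
    pending_.clear();
    bytes_ = 0;
    return out;
  }

 private:
  std::size_t max_bytes_;
  std::size_t bytes_ = 0;
  std::vector<KeyValue> pending_;
};
```

With a budget of roughly 100KB, each call to `TakeSorted()` would yield one micro-batch whose entries can be added to a `rocksdb::WriteBatch` in order.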

Dynamic Level Target Sizes

In an LSM tree with a leveled compaction policy, files from a level do not get compacted with files from the next level until the target size of the current level is exceeded. The target size for each level is computed based on the specified L1 target size and the level size multiplier (usually 10). This usually results in higher space amplification than desired until the last level has reached its target size, as described on the RocksDB blog. To alleviate this, RocksDB can dynamically set target sizes for each level based on the current size of the last level. We use this feature to achieve the expected 1.111 space amplification with RocksDB regardless of the amount of data stored in the RocksDB instance. It can be turned on by setting AdvancedColumnFamilyOptions::level_compaction_dynamic_level_bytes to true.

Shared Block Cache

As mentioned above, leaf nodes are assigned many shard replicas of many collections and there is one RocksDB instance for each shard replica. Instead of using a separate block cache for each RocksDB instance, we use 1 global block cache for all RocksDB instances on the leaf node. This helps achieve better memory utilization by evicting unused blocks across all shard replicas out of leaf memory. We give the block cache about 25% of the memory available on a leaf pod. We intentionally do not make the block cache any bigger, even if there is spare memory available that is not used for processing queries. This is because we want the operating system page cache to have that spare memory. The page cache stores compressed blocks while the block cache stores uncompressed blocks, so the page cache can more densely pack file blocks that are not so hot. As described in the Optimizing Space Amplification in RocksDB paper, the page cache helped reduce file system reads by 52% for three RocksDB deployments observed at Facebook. And the page cache is shared by all containers on a machine, so the shared page cache serves all leaf containers running on a machine.
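Sharing one block cache across instances comes down to creating a single cache object and pointing every instance's table options at it. The fragment below is a minimal sketch; the helper names are hypothetical, and the capacity is whatever the process decides to allot (our configuration list uses 8589934592 bytes). It needs the RocksDB headers and library to build.

```cpp
#include <cstddef>
#include <memory>

#include <rocksdb/cache.h>
#include <rocksdb/options.h>
#include <rocksdb/table.h>

// Hypothetical helper: created once per process and shared by every
// RocksDB instance on the node.
std::shared_ptr<rocksdb::Cache> MakeLeafBlockCache(std::size_t capacity_bytes) {
  return rocksdb::NewLRUCache(capacity_bytes);
}

// Hypothetical helper: builds options for one RocksDB instance that reads
// through the shared cache instead of allocating its own.
rocksdb::Options OptionsWithSharedCache(
    const std::shared_ptr<rocksdb::Cache>& shared_cache) {
  rocksdb::BlockBasedTableOptions table_options;
  table_options.block_cache = shared_cache;

  rocksdb::Options options;
  options.table_factory.reset(
      rocksdb::NewBlockBasedTableFactory(table_options));
  return options;
}
```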

No Compression For L0 & L1

By design, L0 and L1 levels in an LSM tree contain very little data compared to other levels. There is little to be gained by compressing the data in these levels. But we can save some cpu by not compressing data in these levels. Every L0 to L1 compaction needs to access all files in L1. Also, range scans cannot use the bloom filter and need to look up all files in L0. Both of these frequent cpu-intensive operations use less cpu if data in L0 and L1 does not need to be uncompressed when read or compressed when written. This is why, and as recommended by the RocksDB team, we do not compress data in L0 and L1, and use LZ4 for all other levels.
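Per-level compression is expressed through the `compression_per_level` vector on the column family options. The fragment below is a sketch of the "no compression for the first two entries, LZ4 for the rest" policy; the helper name is hypothetical and the fragment needs the RocksDB headers and library to build.

```cpp
#include <cstddef>

#include <rocksdb/options.h>

// Hypothetical helper: leaves the first two entries uncompressed and uses
// LZ4 for every remaining level.
void DisableCompressionForTopLevels(rocksdb::ColumnFamilyOptions* cf_options) {
  const std::size_t levels =
      cf_options->num_levels > 0
          ? static_cast<std::size_t>(cf_options->num_levels)
          : 0;
  cf_options->compression_per_level.assign(levels, rocksdb::kLZ4Compression);
  for (std::size_t level = 0; level < 2 && level < levels; ++level) {
    cf_options->compression_per_level[level] = rocksdb::kNoCompression;
  }
}
```

The RocksDB header comments describe how entries after index 0 are interpreted relative to the base level when level_compaction_dynamic_level_bytes is enabled, so it is worth checking that description against the RocksDB version in use.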

Bloom Filters On Key Prefixes

As described in the converged indexing blog, we store every column of every document in RocksDB in 3 different ways and in 3 different key ranges. For queries, we read each of these key ranges differently. Specifically, we never look up a key in any of these key ranges using the exact key. We usually simply seek to a key using a smaller, shared prefix of the key. Therefore, we set BlockBasedTableOptions::whole_key_filtering to false so that whole keys are not used to populate, and thereby pollute, the bloom filters created for each SST. We also use a custom ColumnFamilyOptions::prefix_extractor so that only the useful prefix of the key is used for constructing the bloom filters.
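The fragment below sketches how these two settings fit together. Our prefix extractor is custom and not shown here; as a stand-in, the sketch assumes a fixed-length prefix via `NewFixedPrefixTransform`, and the 10 bits per key passed to the bloom filter is an illustrative value rather than our setting. The helper name is hypothetical, and the fragment needs the RocksDB headers and library to build.

```cpp
#include <cstddef>

#include <rocksdb/filter_policy.h>
#include <rocksdb/options.h>
#include <rocksdb/slice_transform.h>
#include <rocksdb/table.h>

// Hypothetical helper: builds bloom filters from key prefixes only.
// Note that this replaces any table factory already set on `options`.
void ConfigurePrefixBloom(rocksdb::Options* options, std::size_t prefix_len) {
  rocksdb::BlockBasedTableOptions table_options;
  table_options.whole_key_filtering = false;  // do not add whole keys
  table_options.filter_policy.reset(rocksdb::NewBloomFilterPolicy(10));
  options->table_factory.reset(
      rocksdb::NewBlockBasedTableFactory(table_options));

  // Stand-in for a custom extractor: the first `prefix_len` bytes of a key.
  options->prefix_extractor.reset(
      rocksdb::NewFixedPrefixTransform(prefix_len));
}
```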

Iterator Freepool

When reading data from RocksDB for processing queries, we need to create 1 or more rocksdb::Iterators. For queries that perform range scans or retrieve many fields, we need to create many iterators. Our cpu profile showed that creating these iterators is expensive. We use a freepool of these iterators and try to reuse iterators within a query. We cannot reuse iterators across queries as each iterator refers to a specific RocksDB snapshot and we use the same RocksDB snapshot for a query.
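A freepool of this kind can be sketched as a small generic object pool that lives for the duration of one query. The `FreePool` template below is a hypothetical illustration, not our implementation: with RocksDB, `T` would be `rocksdb::Iterator` and the factory would call `NewIterator` with read options pinned to the query's snapshot, and the caller would re-seek an iterator after acquiring it.

```cpp
#include <cstddef>
#include <functional>
#include <memory>
#include <utility>
#include <vector>

// Hypothetical per-query pool: hands out previously released objects
// before asking the factory to create a new one.
template <typename T>
class FreePool {
 public:
  explicit FreePool(std::function<std::unique_ptr<T>()> factory)
      : factory_(std::move(factory)) {}

  // Reuses a released object if one is available, otherwise creates one.
  std::unique_ptr<T> Acquire() {
    if (free_.empty()) {
      ++created_;
      return factory_();
    }
    std::unique_ptr<T> item = std::move(free_.back());
    free_.pop_back();
    return item;
  }

  // Returns an object to the pool for reuse within the same query.
  void Release(std::unique_ptr<T> item) {
    if (item) free_.push_back(std::move(item));
  }

  // Number of objects the factory has had to create so far.
  std::size_t created() const { return created_; }

 private:
  std::function<std::unique_ptr<T>()> factory_;
  std::vector<std::unique_ptr<T>> free_;
  std::size_t created_ = 0;
};
```

Destroying the pool at the end of the query frees every pooled object, which matches the constraint that iterators are tied to one snapshot and must not outlive the query.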

Finally, here is the full list of configuration parameters we specify for our RocksDB instances.

Options.max_background_flushes: 2

Options.max_background_compactions: 8

Options.avoid_flush_during_shutdown: 1

Options.compaction_readahead_size: 16384

ColumnFamilyOptions.comparator: leveldb.BytewiseComparator

ColumnFamilyOptions.table_factory: BlockBasedTable

BlockBasedTableOptions.checksum: kxxHash

BlockBasedTableOptions.block_size: 16384

BlockBasedTableOptions.filter_policy: rocksdb.BuiltinBloomFilter

BlockBasedTableOptions.whole_key_filtering: 0

BlockBasedTableOptions.format_version: 4

LRUCacheOptions.capacity: 8589934592

ColumnFamilyOptions.write_buffer_size: 134217728

ColumnFamilyOptions.compression[0]: NoCompression

ColumnFamilyOptions.compression[1]: NoCompression

ColumnFamilyOptions.compression[2]: LZ4

ColumnFamilyOptions.prefix_extractor: CustomPrefixExtractor

ColumnFamilyOptions.compression_opts.max_dict_bytes: 32768
